Hybrid cloud is no longer just something to consider: for our customers, especially the larger ones, it is already a reality. To understand what we mean by hybrid cloud, we first need to define two terms:

- Hybrid cloud: Uses a mix of on-premises infrastructure and one or more clouds.

- Multi-cloud: Uses two or more clouds.

Typically, companies are using both hybrid and multi-cloud architectures.

When we talk to our customers about hybrid cloud and/or multi-cloud, we often hear the following:

- We want to use the best-of-breed cloud for a workload.

- We want to be cloud agnostic.

- One cloud provider does not serve all our locations.

- Some workloads need to stay on-premises, others can go to the cloud.

It all really boils down to one question: How do I architect my analytics for the hybrid cloud?

First, the advantages and disadvantages of hybrid cloud or multi-cloud environments need to be considered:

| Advantages | Disadvantages |
| --- | --- |
| Special purpose clouds (e.g. Salesforce, SAP, …) | Data movement between clouds / on-premises is slow and expensive |
| Better chance for a local data center | Different stacks and proprietary technology on each cloud |
| Best-in-class cloud for specific workload | Different costs and billing |
| Better price for a specific workload | Expertise for different clouds needed |

A key aspect when planning a hybrid cloud architecture is data movement between clouds and on-premises environments. While moving data into the cloud is typically free, moving data out of the cloud can be expensive. In addition, communication in and out of the cloud adds considerable latency.
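To make the asymmetry between inbound and outbound traffic concrete, here is a minimal sketch of a transfer-cost estimate. The function name and the rate of 0.09 USD per GB are assumptions chosen for illustration only; actual egress prices differ by provider, region and volume.

```python
# Hypothetical rates for illustration; check your provider's price list.
EGRESS_USD_PER_GB = 0.09   # assumed charge for data leaving the cloud
INGRESS_USD_PER_GB = 0.0   # inbound transfer is typically not charged


def transfer_cost_usd(gb_in: float, gb_out: float,
                      egress_rate: float = EGRESS_USD_PER_GB,
                      ingress_rate: float = INGRESS_USD_PER_GB) -> float:
    """Estimate the transfer charge for traffic crossing one cloud boundary."""
    if gb_in < 0 or gb_out < 0:
        raise ValueError("volumes must be non-negative")
    return gb_in * ingress_rate + gb_out * egress_rate


# Load 10 TB of raw data into the cloud and export only 50 GB of results.
results_only = transfer_cost_usd(gb_in=10_000, gb_out=50)

# Pull the same 10 TB of raw data back out of the cloud instead.
raw_export = transfer_cost_usd(gb_in=0, gb_out=10_000)

print(f"results only: {results_only:.2f} USD, raw export: {raw_export:.2f} USD")
```

Under these assumed rates, the volume that leaves the cloud drives the bill, while the volume that enters it does not.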

An analytic ecosystem begins with sources and ends with consumers. The ecosystem itself has three tiers:

- Receive: Raw data lands here, and some standardization and cleansing are performed.

- Analyze: Reporting, model training and model scoring are done in this tier.

- Serve: Here the data products are available in a format ready for consumption.

The figure below shows the analytic ecosystem with the tiers as described. The thickness of each arrow indicates the amount of data moving between the tiers:

[Figure: the analytic ecosystem and the data volumes moving between its tiers]

As the figure above shows, the most cost-effective place to move data between clouds, or between on-premises and the cloud, is between the Serve tier and the consumer, because the least data flows there. The bandwidth usage between the Analyze and the Serve tier is the second lowest.
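The reasoning behind choosing where to cross a cloud boundary can be sketched as a ranking of the hops by data volume. The volumes below are invented placeholders that only mirror the ordering described in the text; the names `HOPS` and `rank_cut_points` are likewise hypothetical.

```python
from typing import List, Tuple

Hop = Tuple[str, str, float]  # (from, to, hypothetical daily volume in GB)

# Placeholder volumes: only their relative order reflects the article.
HOPS: List[Hop] = [
    ("Source", "Receive", 1000.0),
    ("Receive", "Analyze", 400.0),
    ("Analyze", "Serve", 50.0),
    ("Serve", "Consumer", 5.0),
]


def rank_cut_points(hops: List[Hop]) -> List[Hop]:
    """Order the hops from least to most data moved.

    The first entry is the cheapest place to cross a cloud boundary.
    """
    return sorted(hops, key=lambda hop: hop[2])


for src, dst, gb in rank_cut_points(HOPS):
    print(f"{src} -> {dst}: {gb:.0f} GB/day")
```

With measured volumes in place of the placeholders, the same ranking shows which boundary a given workload can cross most cheaply.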

Below we show two examples of ecosystem architectures that illustrate the data movement between the Serve tier and the consumer.

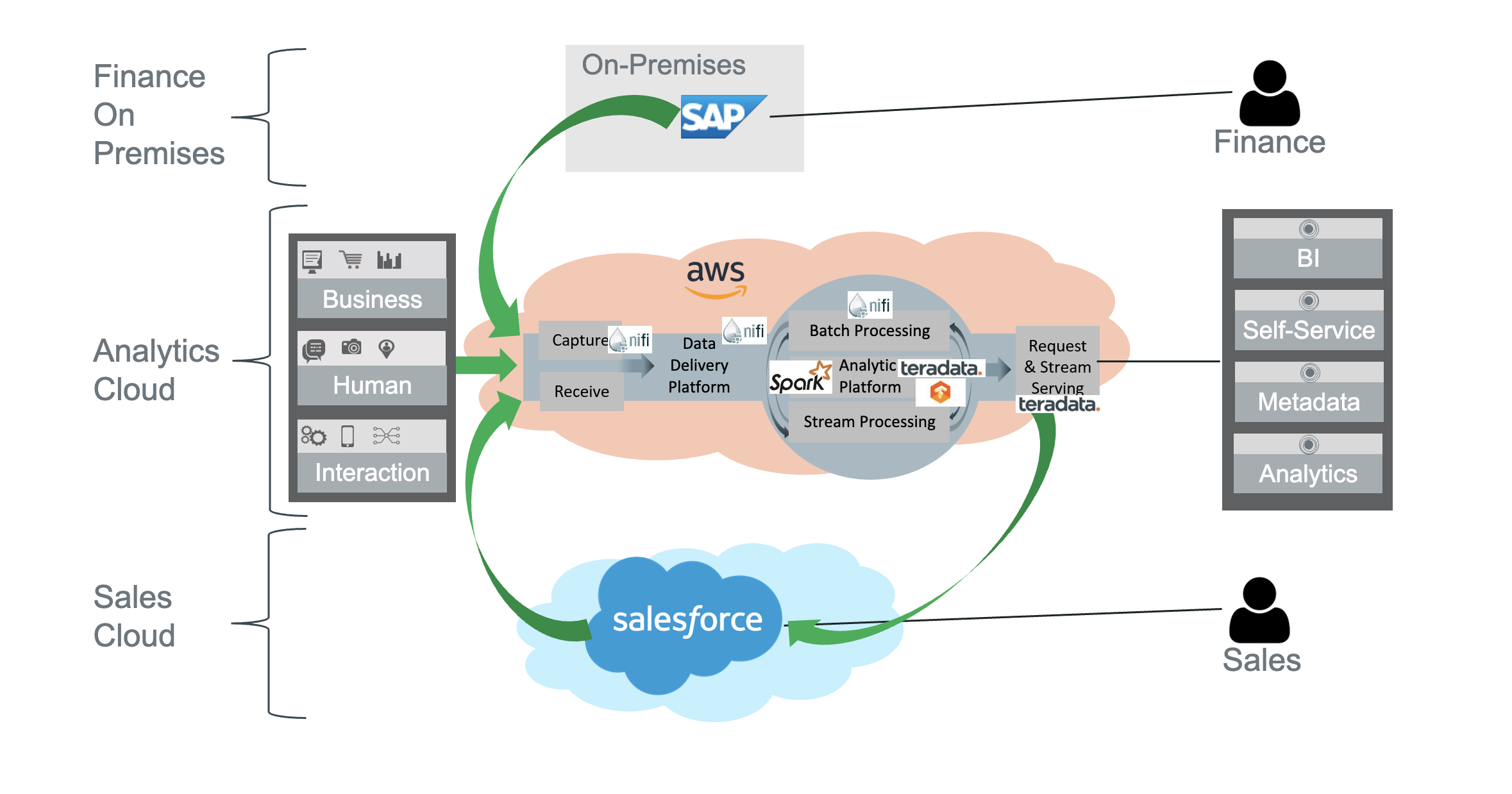

In the first example, the sources are operational systems on-premises and in the Salesforce cloud. The Receive, Analyze and Serve tiers are all in the AWS cloud. Consumption happens on-premises.

Ingestion into the cloud uses a lot of bandwidth, but the cloud providers do not charge for this. The outbound bandwidth usage is minimized as only results are transferred to the consumer on-premises.

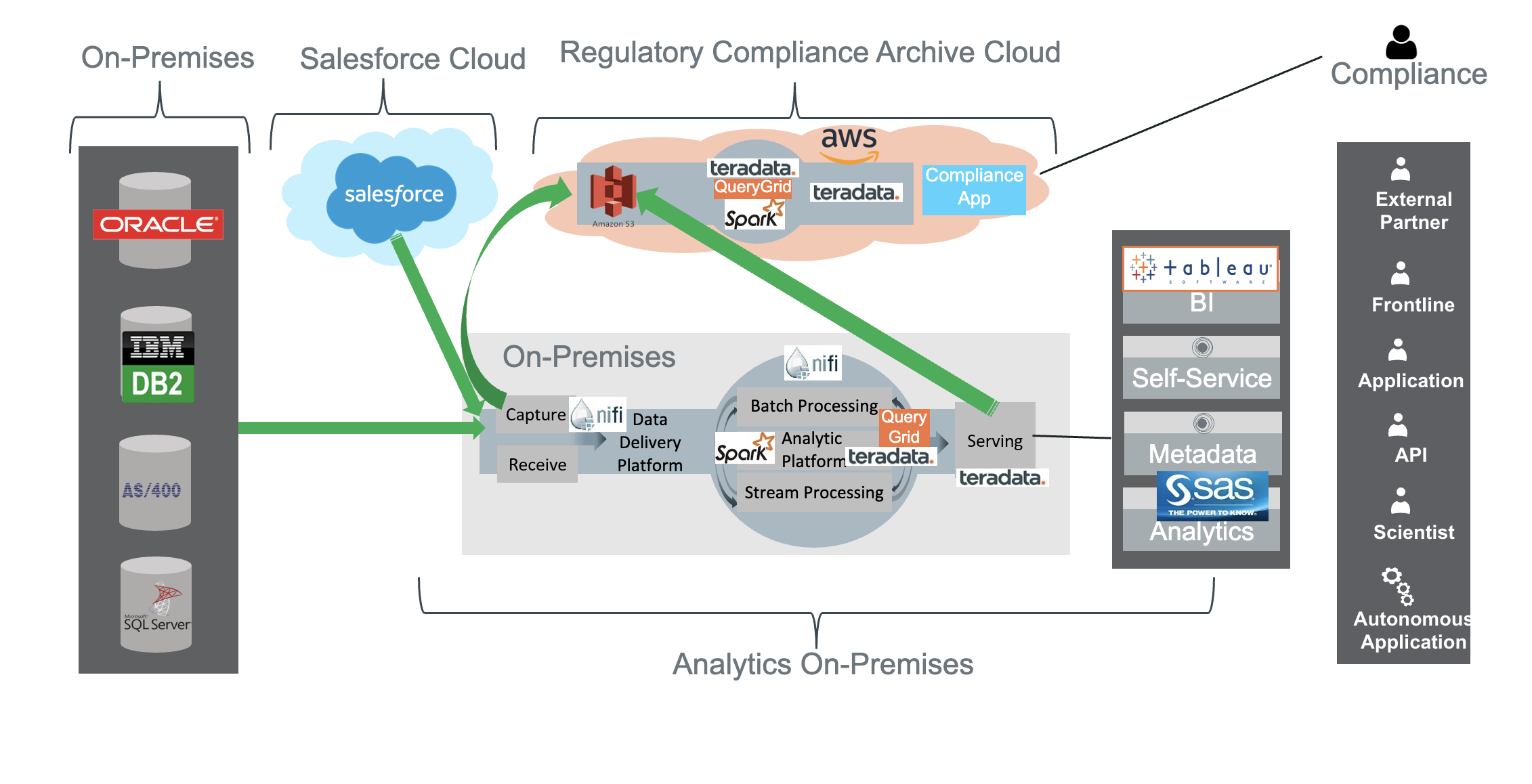

In the second example, the main analytics is done on-premises, as are the sources (with the exception of Salesforce) and the consumers. In addition, there is a regulatory and compliance system in the cloud to serve use cases that need historic data no longer available in the main analytics system.

As in the first architecture, the bandwidth usage out of the cloud, and therefore the cost, is minimized in the second architecture.

To conclude, here is a list of best practices:

| Best Practice | Details |
| --- | --- |
| Minimize data transfer between clouds | Exporting data is costly; bandwidth is limited |
| Minimize time-critical dependencies between clouds | |
| Use applications as-a-service in the cloud | Salesforce; Office 365; SAP Financials |
| Use leading and established general-purpose clouds | |
| Apply the same governance rules to all clouds and on-premises | |
| Use a dedicated connection to the cloud and in between clouds | Lower latency; guaranteed bandwidth; more reliable |
| Use cloud-specific base SaaS | |
| Use portable specialized services | |
| Deploy custom code as Docker images with Kubernetes | DevOps; more cloud agnostic |

The key recommendation is that the analytic ecosystem can consist of multiple clouds and on-premises environments, but the core analytics should be done on a single cloud or on-premises. It all boils down to data centricity within that one environment and a common tool chain for analytics.
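As a rough illustration of this recommendation, the sketch below checks whether the three core tiers of a planned architecture share one location. The placement dictionaries, the location labels and the function name are assumptions made for this example, not part of any tool.

```python
from typing import Dict

CORE_TIERS = ("Receive", "Analyze", "Serve")


def core_is_colocated(placement: Dict[str, str]) -> bool:
    """Return True when all core analytics tiers run in the same location."""
    return len({placement[tier] for tier in CORE_TIERS}) == 1


# Simplified version of the first example: core tiers in AWS,
# sources and consumers on-premises.
first_example = {
    "Sources": "on-premises",
    "Receive": "AWS",
    "Analyze": "AWS",
    "Serve": "AWS",
    "Consumers": "on-premises",
}

# Hypothetical variant that splits the core across two clouds.
split_core = {**first_example, "Analyze": "other-cloud"}

print(core_is_colocated(first_example))
print(core_is_colocated(split_core))
```

A check like this could run as part of an architecture review, flagging designs that spread the core analytics over several environments.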

For more information about this topic, check out the white paper, “De-Risking Hybrid, Multi-Cloud Analytics” and the webinar, “Moving Toward a Modern Analytics Architecture”.

(Author):

Tomi Schumacher

Tomi Schumacher is an Eco System Architect/Principal Data Engineer with over 20 years of experience in IT. Tomi has a wide range of experience: he has worked as a consultant, architect, manager and independent contractor in both the US and Switzerland for various industries. He has been involved in the whole project management life cycle and has worked on backend services, various databases, web frontends and mobile apps. Tomi has worked in private (VMware) and public clouds.
