Many Teradata customers are interested in integrating Teradata Vantage with Microsoft Azure First Party Services. This guide will help you to connect Teradata Vantage using the Native Object Store feature to query Salesforce data sourced by Azure Data Factory.

The procedure offered in this guide has been implemented and tested by Teradata. However, it is offered on an as-is basis. Microsoft does not provide validation of Teradata Vantage using Native Object Store capability with Azure services.

This guide includes content from both Microsoft and Teradata product documentation.

Overview

This guide describes the procedure to query customer information from Salesforce and combine it with order and shipping information from Vantage for further analysis.

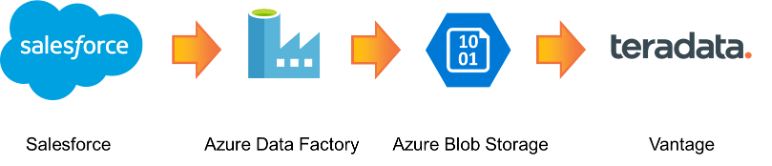

Azure Data Factory transfers the customer data from Salesforce to Azure Blob Storage. Teradata Vantage then uses its Native Object Store (NOS) feature to join the data on Blob Storage with data in Vantage in single query.

This is a diagram of the workflow.

About Azure Data Factory

Azure Data Factory is the cloud-based ETL and data integration Azure service that allows you to create data-driven workflows for orchestrating data movement and transforming data at scale. Using Azure Data Factory, you can create and schedule data-driven workflows (pipelines) that can ingest data from disparate data stores.

Azure Data Factory supports 90+

connectors to choose from to source and sink targets including – Azure Blob Storage, Teradata Vantage, Salesforce and Snowflake.

See

documentation for more information on Azure.

About Teradata Vantage

Vantage is the modern cloud platform that unifies data warehouses, data lakes, and analytics into a single connected ecosystem.

Vantage combines descriptive, predictive, prescriptive analytics, autonomous decision-making, ML functions, and visualization tools into a unified, integrated platform that uncovers real-time business intelligence at scale, no matter where the data resides.

Vantage enables companies to start small and elastically scale compute or storage, paying only for what they use, harnessing low-cost object stores and integrating their analytic workloads.

Vantage supports R, Python, Teradata Studio, and any other SQL-based tools. You can deploy Vantage across public clouds, on-premises, on optimized or commodity infrastructure, or as-a-service.

Teradata Vantage Native Object Store (NOS) can be used to explore data in external object stores, like Azure Blob Storage, using standard SQL. No special object storage-side compute infrastructure is required to use NOS. You can explore data located in a Blob Storage container by simply creating a NOS table definition that points to your bucket. With NOS, you can quickly import data from Blob Storage or even join it other tables in the database.

See the

documentation for more information on Teradata Vantage.

Prerequisites

You should be familiar with Azure concepts, Azure Data Factory, Salesforce, and Teradata Vantage.

You will need the following accounts and systems:

Salesforce account (you can

sign up for a developer account) with API access. API access is enabled by default for Enterprise, Unlimited, Developer, and Performance editions. For more information, see

Enable API access in Salesforce by permission set.

Procedure

These are the steps to connect Teradata Vantage to Salesforce using Azure Data Factory.

- Create a Copy Data pipeline job in Azure Data Factory

- Connect Teradata Vantage to data in Azure Blob Storage

- Explore data in Azure Blob Storage using Native Object Store

Create a Copy Data pipeline job in Azure Data Factory

This step outlines how to use the Copy Data tool in Azure Data Factory to copy data from Salesforce to Azure Blob Storage. For this example, I’m using a

Salesforce developer account to connect to Salesforce.

For more information see

Quickstart: Use the Copy Data tool to copy data article.

Open Azure Data Factory UI

Logon to

Azure portal and open Data Factory instance.

Select the Author & Monitor tile to start the Azure Data Factory user interface application on a separate tab.

Start the Copy Data tool

Switch to the

Data Factory .png)

page.

Select the

Copy Data tile to start the Copy Data tool.

.png)

On the Properties page, specify a name for the pipeline and its description. Click Next.

.png)

Create a connection to Salesforce

On the Source data store page, click + Create new connection to add a connection.

.png)

Select Salesforce from the gallery. Click Continue.

.png)

On the New linked service (Salesforce) page, enter a name for the linked service, an environment URL (e.g. https://login.salesforce.com), a user name, a password, and a security token for Salesforce access. Click Create.

.png)

See Get a security token for instructions on how to get and reset a security token.

See Copy data from and to Salesforce by using Azure Data Factory for more information on using Azure Data Factory with Salesforce.

On the Source data store page, choose the previously created connection to Salesforce. Click Next.

.png)

Choose the Existing Tables object and click the Account item. Click Next.

.png) On the Apply filter page, choose default. Click Next.

On the Apply filter page, choose default. Click Next.

Create a connection to Azure Blob Storage

On the Destination data store page, click + Create new connection.

On the New linked service page, click on Azure Blob Storage. Click Continue.

.png)

On the New linked service (Azure Blob Storage) page, enter the authentication method (e.g. account key).

Choose From Azure subscription for the Account selection method.

Select your Azure subscription and storage account name. Click Create.

.png)

On Destination data store page, choose the previously created connection to Azure Blob Storage. Click Next.

.png)

On the Choose the output file or folder page, click Browse.

Choose the container you created in your blob storage account. Click Choose.

Enter a file (e.g. salesforce). Click Next.

.png)

Because this article did not evaluate parameters Max concurrent connections and Block size see Blob storage as a sink type for more information on the connections and block size parameters.

On the File format settings page, set file format settings for output file (e.g. JSON). Click Next.

.png)

Azure Blob Storage supported file formats are text, Avro, ORC, JSON, and Parquet. NOS supports the JSON, CSV, and Parquet formats. For more information, see Teradata Vantage™ - Native Object Store Getting Started Guide Release.

On the Schema mapping page, select all of columns. Click Next.

.png)

On the Settings page, choose the default settings (e.g. blank). Click Next.

.png)

The parameters Enable staging, Data integration unit, and Degree of copy parallelism are beyond the scope of this guide. See Copy performance optimization features for more information.

On the Summary page, review all of the settings. Click Next.

.png)

On the Deployment complete page, click Monitor to view job progress or click Finish if job completed successfully.

.png)

Connect Teradata Vantage to data in Azure Blob Storage

Native Object Store (NOS) can explore and analyze data in Azure Blob Storage. This section lists a few commonly used functions of NOS. Detailed information is available in Native Object Store – Teradata Vantage Advance SQL Engine 17.0 (Orange Book).

NOS can make a permanent connection with an object, using a foreign table, or a temporary connection with an operator in a SQL command.

Create a Foreign Table

A foreign table allows the external data to be easily referenced within the Vantage SQL Engine in a structured, relational format.

Login to Teradata Vantage system.

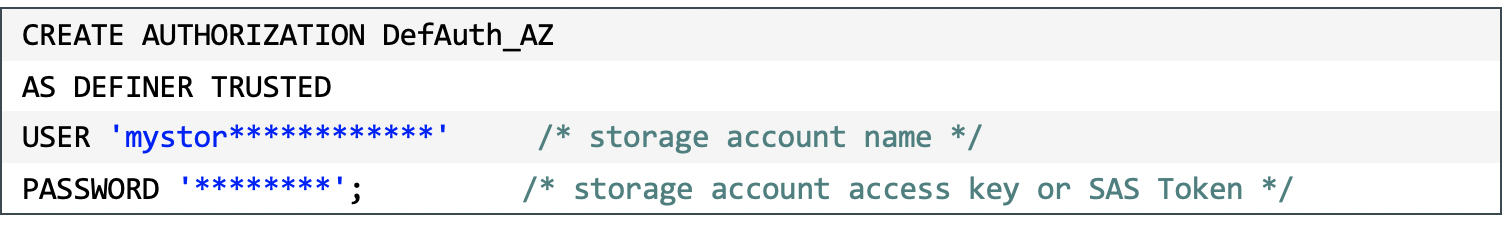

Create an AUTHORIZATION object with access keys for Blob Storage container access. The USER parameter is the storage account name and the PASSWORD parameter is the access key or SAS token.

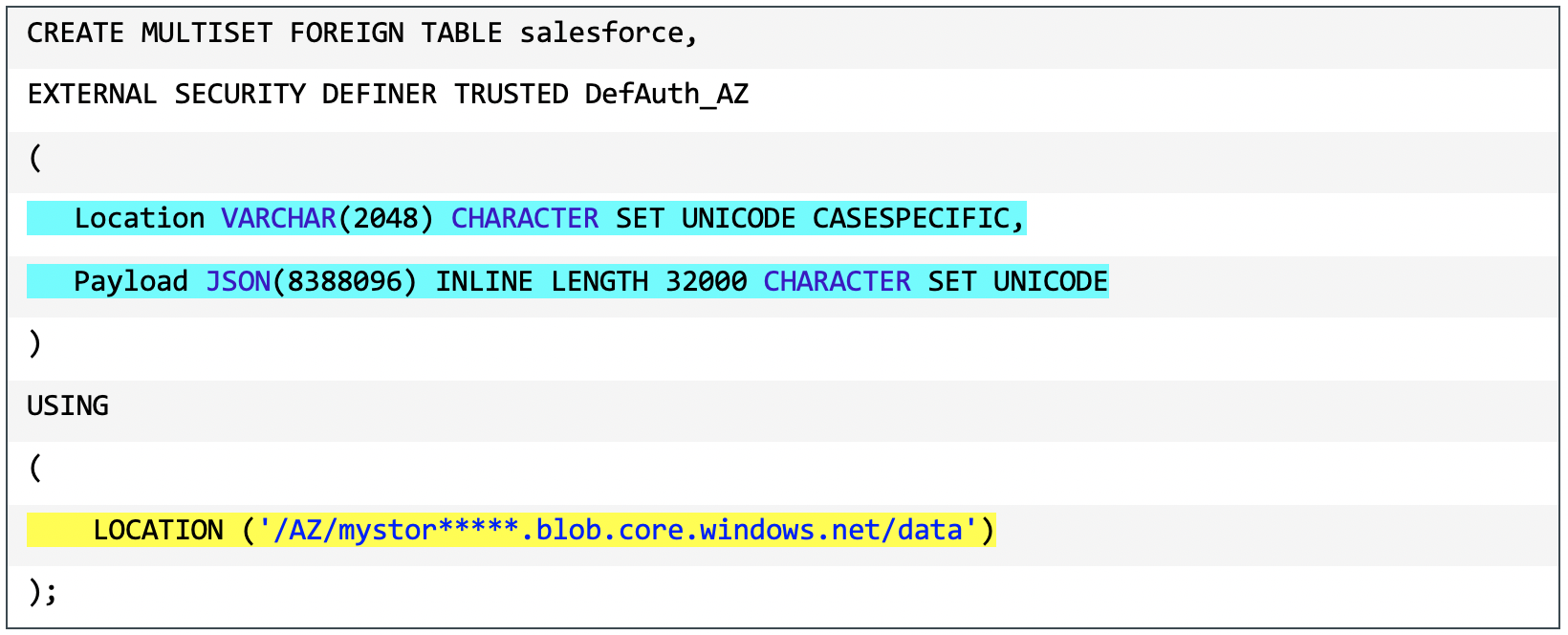

Create a foreign table on the JSON file on Blob Storage. Include the AUTHORIZATION object previously created.

The LOCATION parameter, highlighted in yellow, references the top-level, single name of the container in Azure Blob Storage.

If the file name does not have standard extension (e.g. “.json”, “.csv”, “.parquet”), then columns definitions for Location and Payload are required, highlighted in blue, to indicate the type of the data file.

Foreign tables are always defined as No Primary Index (NoPI) tables.

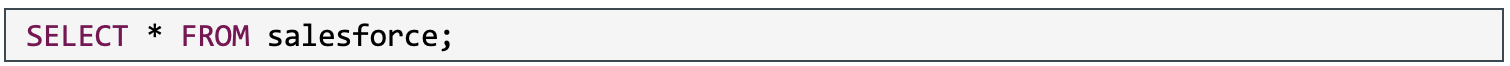

You can use standard SQL queries on foreign tables.

Partial output from “SELECT * FROM salesforce;”.

Partial output from “SELECT * FROM salesforce;”.

.png)

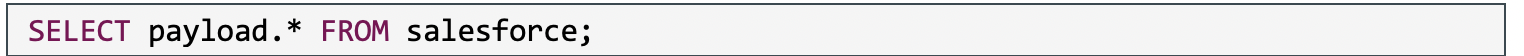

Partial output form “SELECT payload.* FROM salesforce;”.

.png)

The Location column is the address in the object store system. The payload column contains the data itself. Each record in the object is a separate JSON object and is returned in a separate row.

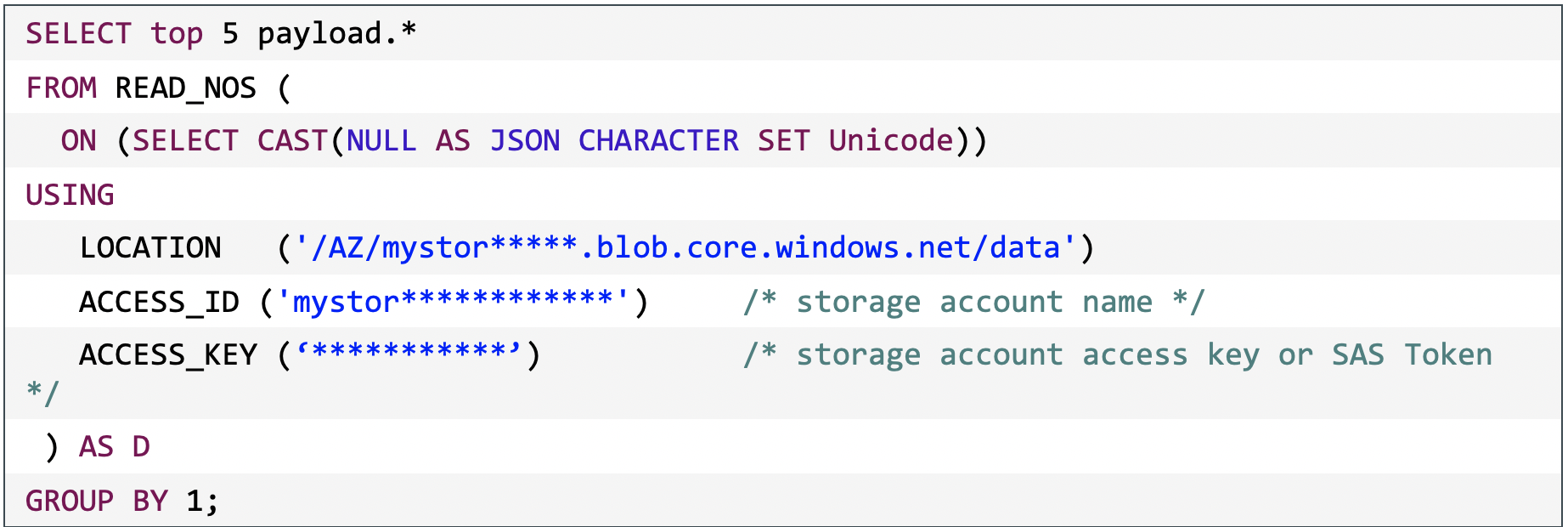

Use the READ_NOS table operator

The READ_NOS table operator can be used to sample and explore a percent of the data without having to define a foreign table.

This is partial output from the above SQL query with the READ_NOS table operator.

This is partial output from the above SQL query with the READ_NOS table operator.

.png)

Explore data in Azure Blob Storage using Native Object Store

Find JSON keys in JSON data

JSON data can contain different attributes in different records. The JSON_KEYS table operator will determine the full list of possible attributes in a data store.

This is partial output from the above SQL query with the JSON_KEYS table operator.

This is partial output from the above SQL query with the JSON_KEYS table operator.

.png)

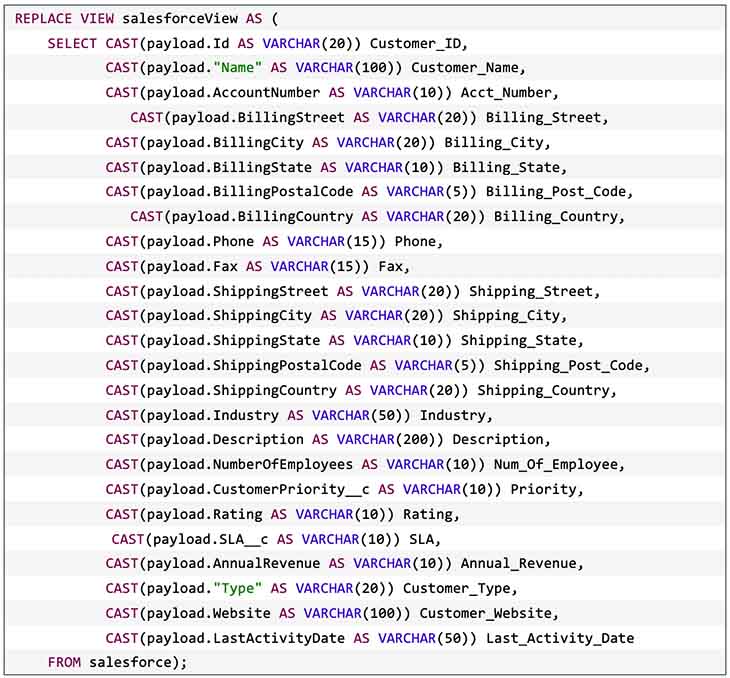

Create a simplified view

Views can simplify the names associated with the payload attributes in a JSON object. A view hides the Location references and makes the Payload column look like normal columns.

The following is an examplar view statement. The columns were discovered using the JSON_KEYS table operator.

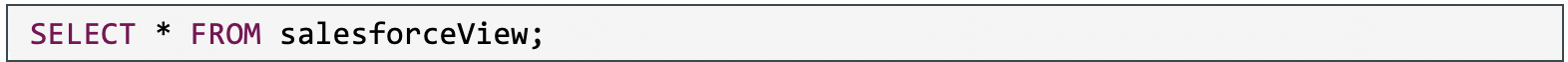

The data in the JSON object can now be queried in a friendly format.

This is the partial output from the above view.

.png)

Join data from Azure Blob Storage to Data in Vantage

Foreign tables can be joined with normal tables in Vantage for further analysis.

For example, a Vantage instance has three tables for order, order items, and shipping addresses.

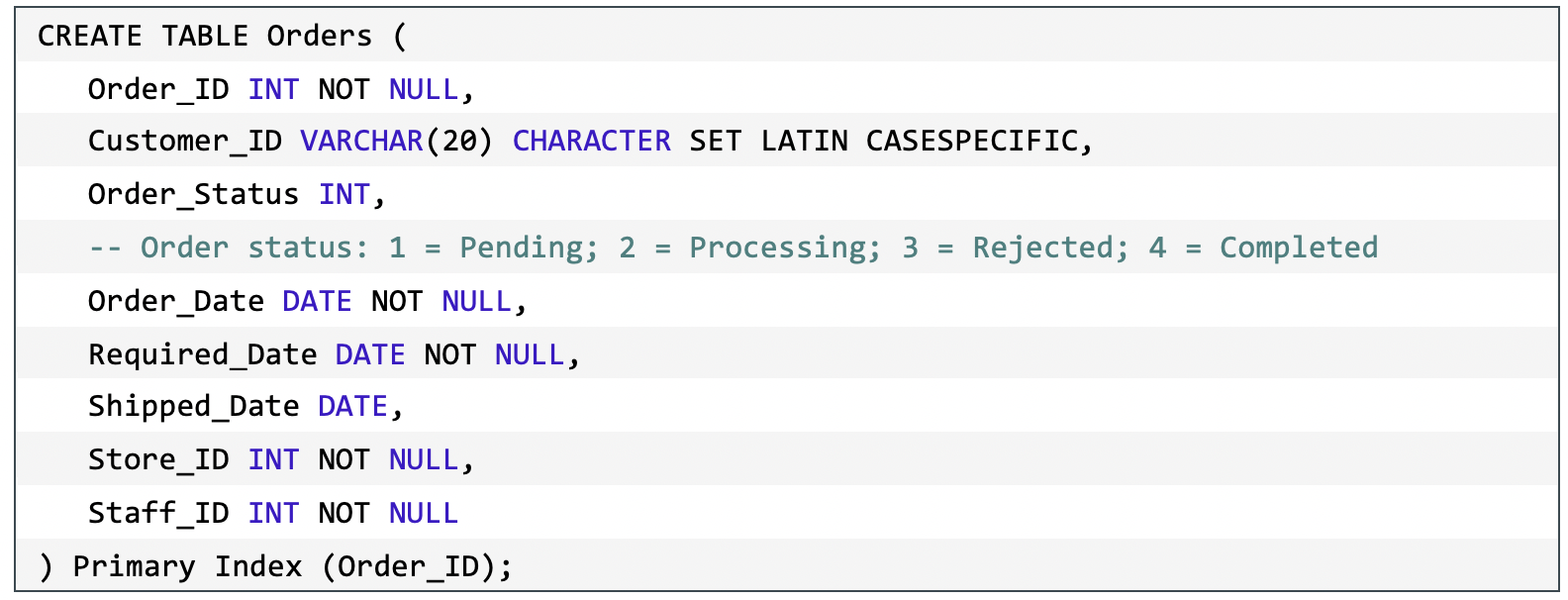

The table definition for the Orders table.

.png) The table definition for the Order Items table.

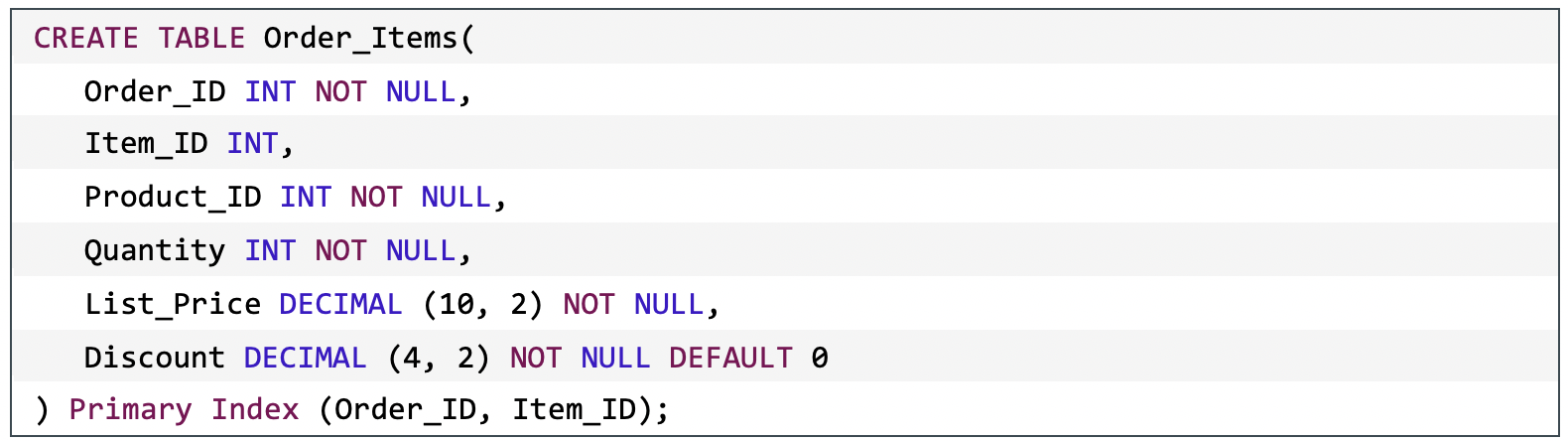

The table definition for the Order Items table.

.png)

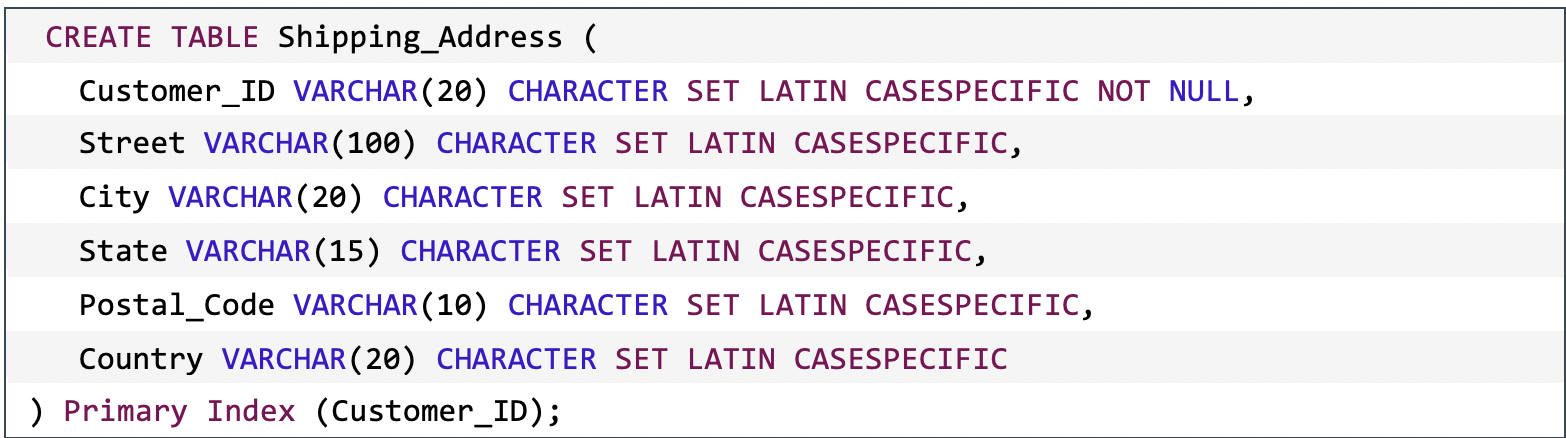

The table definition for the Shipping Addresses table.

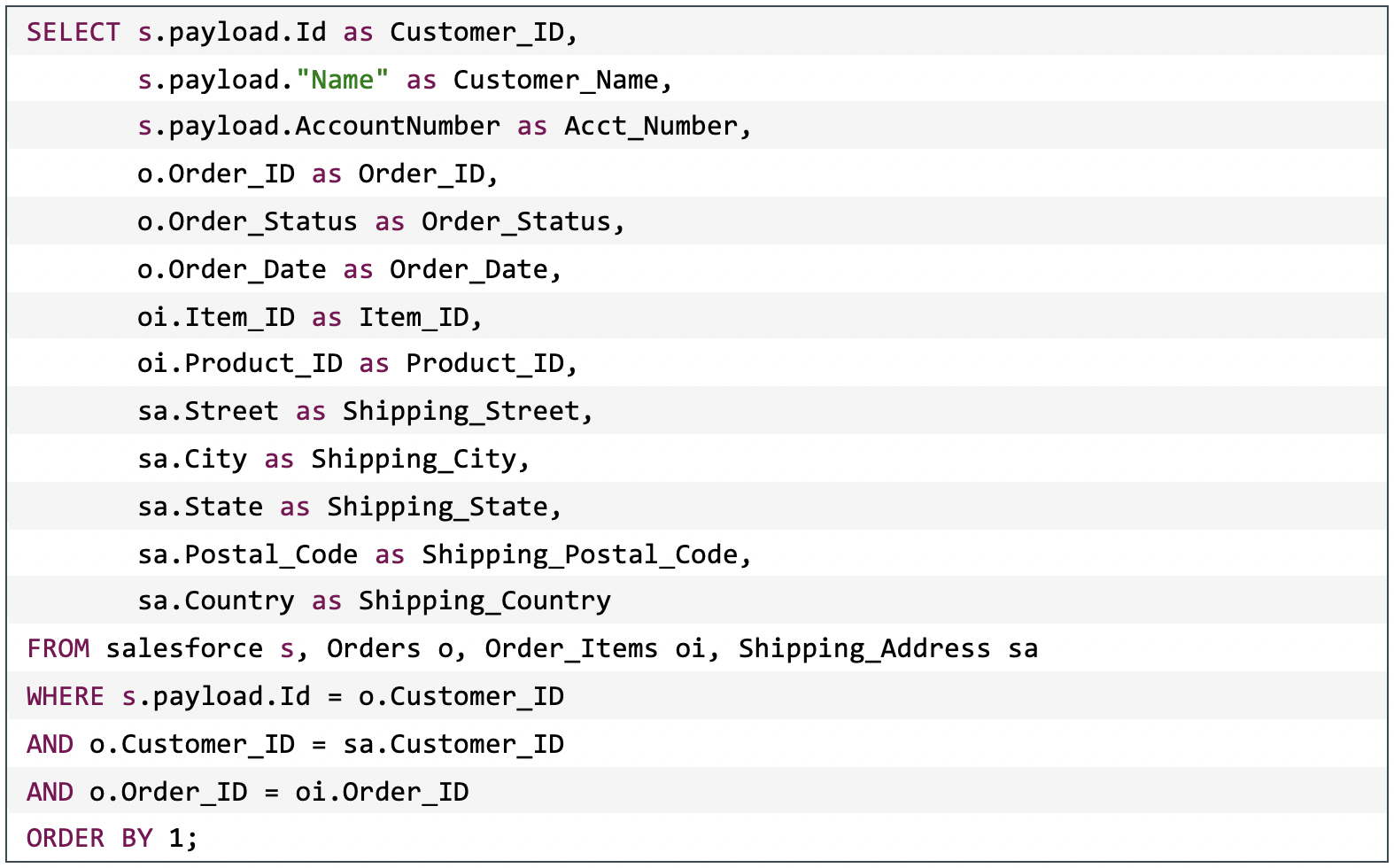

.png) The foreign table, Salesforce, can now be joined to the Orders and Orders Items tables to seamlessly query a customer’s order information.

The foreign table, Salesforce, can now be joined to the Orders and Orders Items tables to seamlessly query a customer’s order information.

Output from the above query with the foreign table.

.png)

Load Data from Azure Blob Storage into Vantage

A persistent copy of the Blob Storage data can be useful when repeated access of the same data is expected. A foreign table and the READ_NOS do not automatically make a persistent copy of the data queried from Azure Blob Storage.

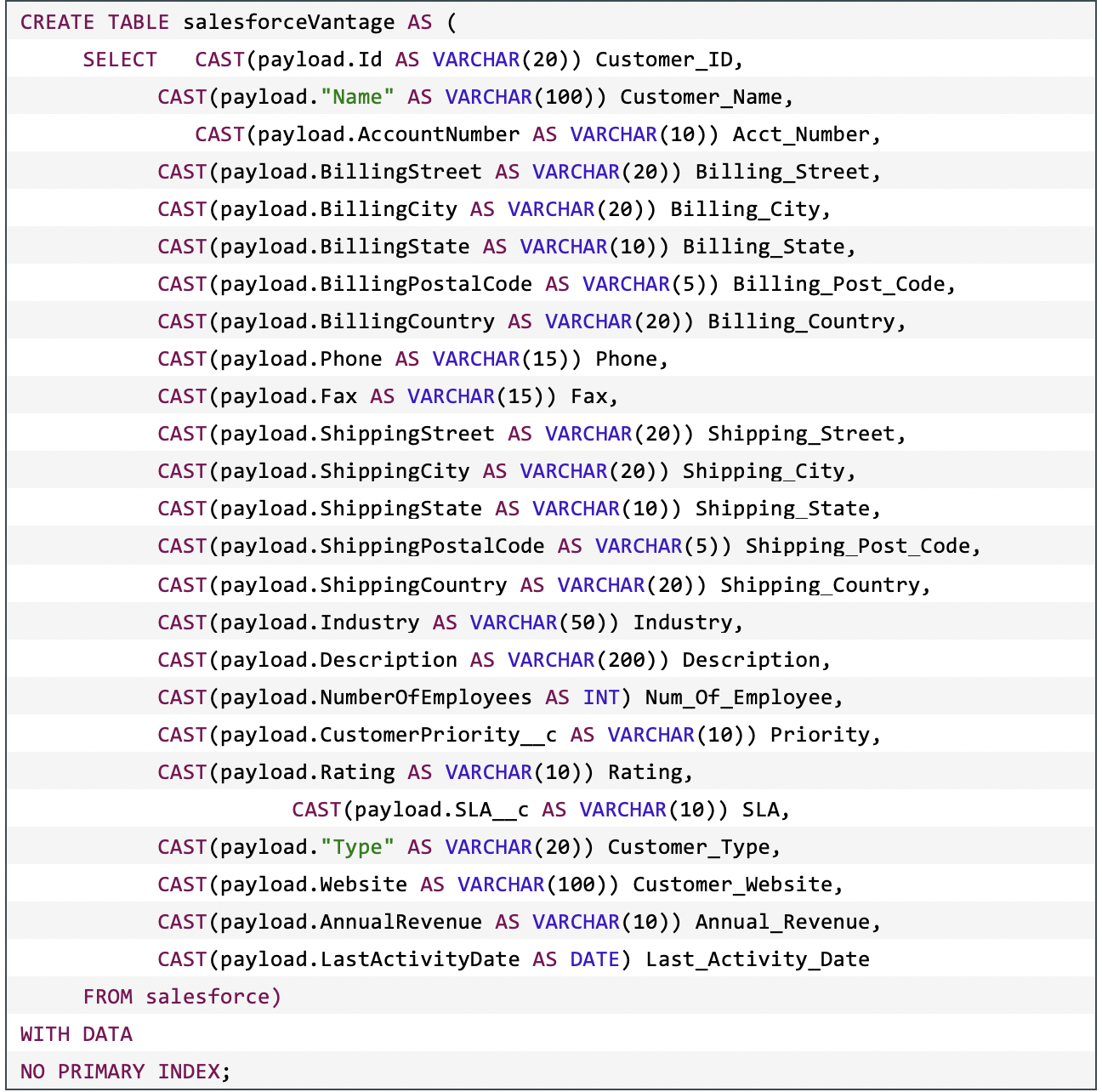

Use CREATE TABLE with a foreign table

A table can be created by querying a foreign table or, preferably, a simplified view over the foreign table. When using a simplified view, each attribute will become a column in the relational table.

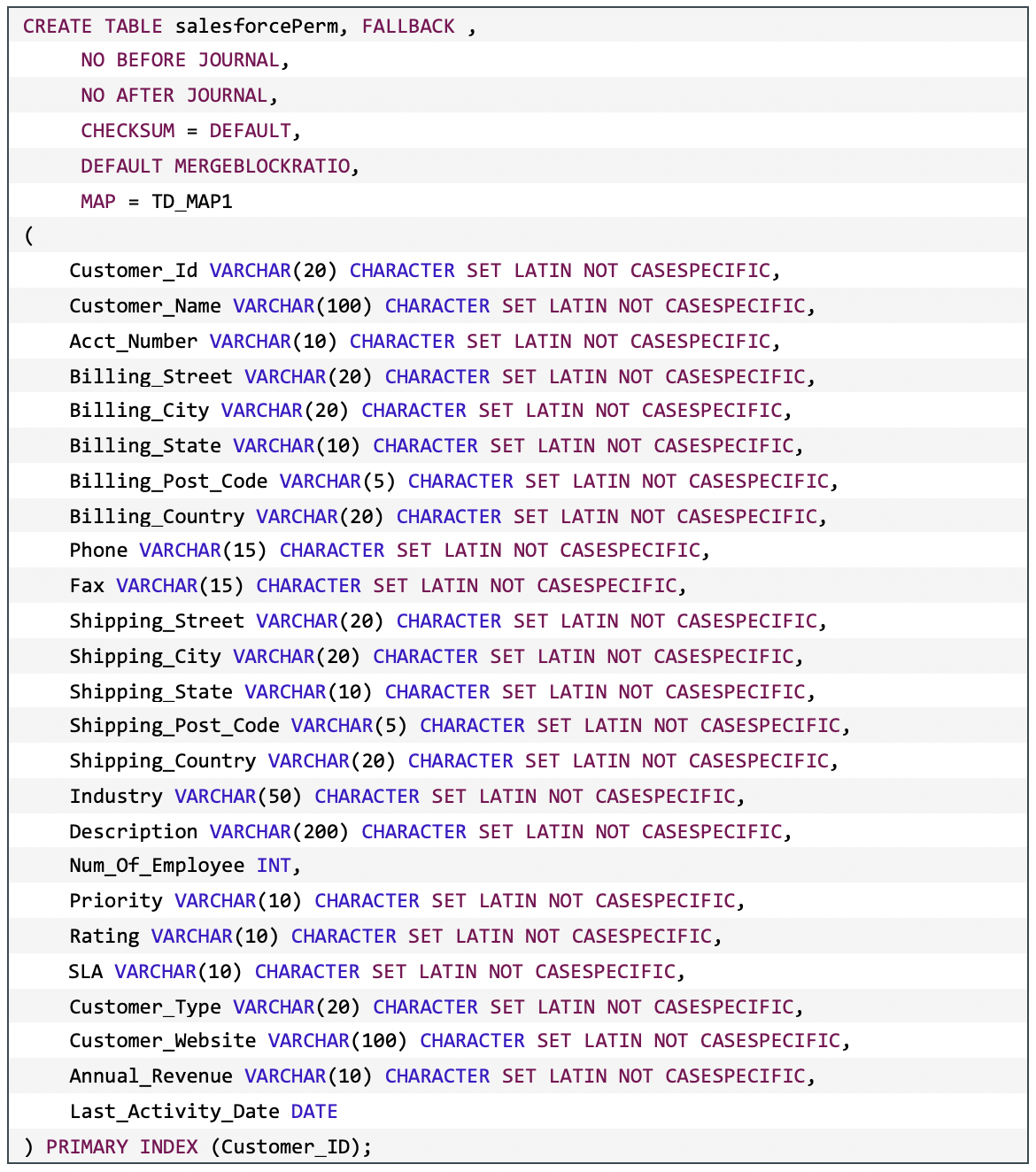

This is an exemplar create table statement.

Once the table is created and loaded, it can be queried like any normal table.

Once the table is created and loaded, it can be queried like any normal table.

This is the partial output from the previous query.

.png)

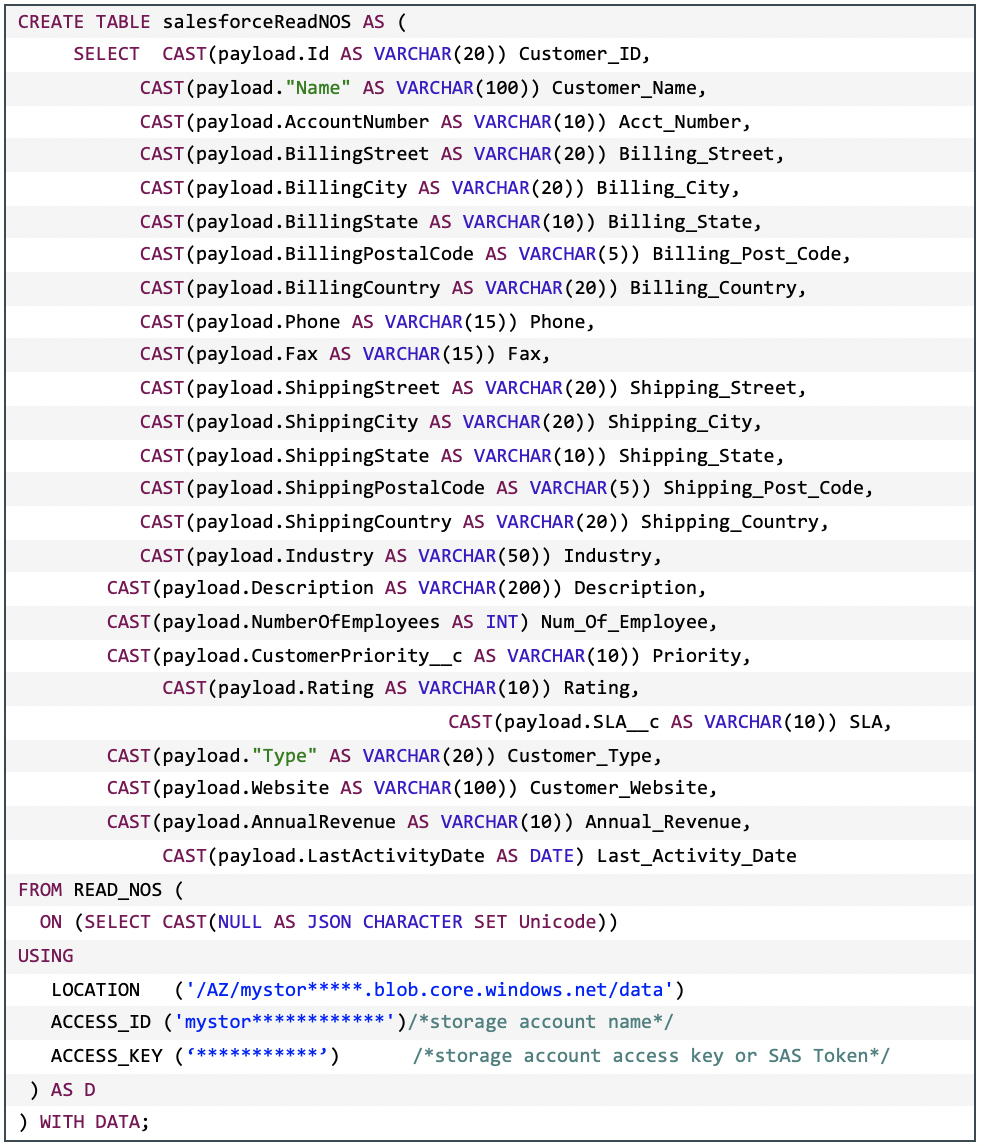

Use CREATE TABLE with the READ_NOS table operator

An alternative to using foreign table is to use the READ_NOS table operator. This table operator allows you to access data directly from an object store without first building a foreign table. You can combine the

READ_NOS table operator with a CREATE TABLE clause to build a persistent version of the data in the database.

This is an exemplar create table statement.

Once the table is created and loaded, it can be queried like any normal table.

Once the table is created and loaded, it can be queried like any normal table.

This is the partial output from the previous query.

.png)

Create a table and then INSERT SELECT

The previous options of loading data combine the creation of the table with the querying of the object data. Another option is to create the table and then load the table. This allows for successive additions of data into the relational table as well as more control over the definition of the relational table. For example, the relational table can be defined as MULTISET.

This is an exemplar create table statement.

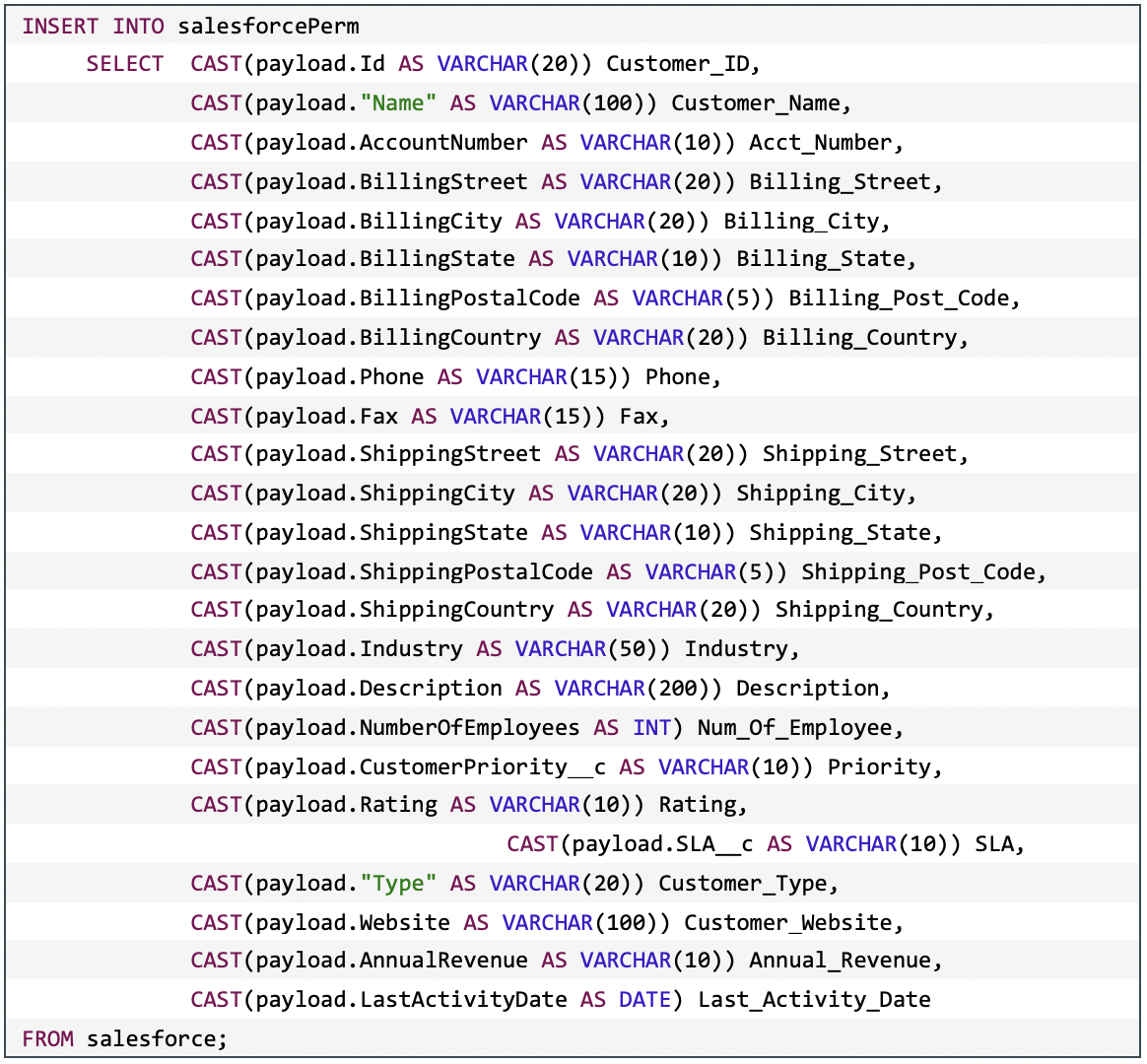

This is an exemplar INSERT SELECT statement.

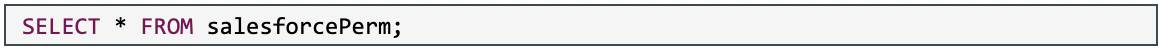

Once the table is created and loaded, it can be queried like any normal table.

This is the partial output from the previous query.

This is the partial output from the previous query.

.png)