Using Existing Capabilities When Executing Your Long-Term Strategy

Organisations are increasingly taking a position on their future strategy for data and analytics, particularly where these are used to drive strategic business decisions. Accordingly, many organisations now have a position on cloud, sometimes even a publicly stated one, which sits somewhere on a spectrum from “active cloud adoption”, through “cloud first mandate”, “cloud first strategy” and “cloud curious”, to having ruled out cloud for the time being.

Meanwhile, many solution providers have articulated a host of perceived benefits for cloud or hybrid strategies, and it is easy to see how, at least on paper, these benefits could manifest. However, as with any material change in strategy, numerous challenges exist. I’d like to explore one area which I think has been largely missed in the articles and literature I have read to date: the lack of a clearly defined strategy for actually making the move to cloud happen in a series of manageable steps that will not derail the existing analytical capabilities of the business.

Experience has shown that many grand plans to make sweeping changes to analytic infrastructures fail, not because they are too ambitious or even particularly complex in themselves, but because they have not properly taken into account the need to run and extend existing analytic capabilities in the meantime. I propose a flexible roadmap approach that allows change to be managed coherently and lets the lessons learned from each step shape the future approach.

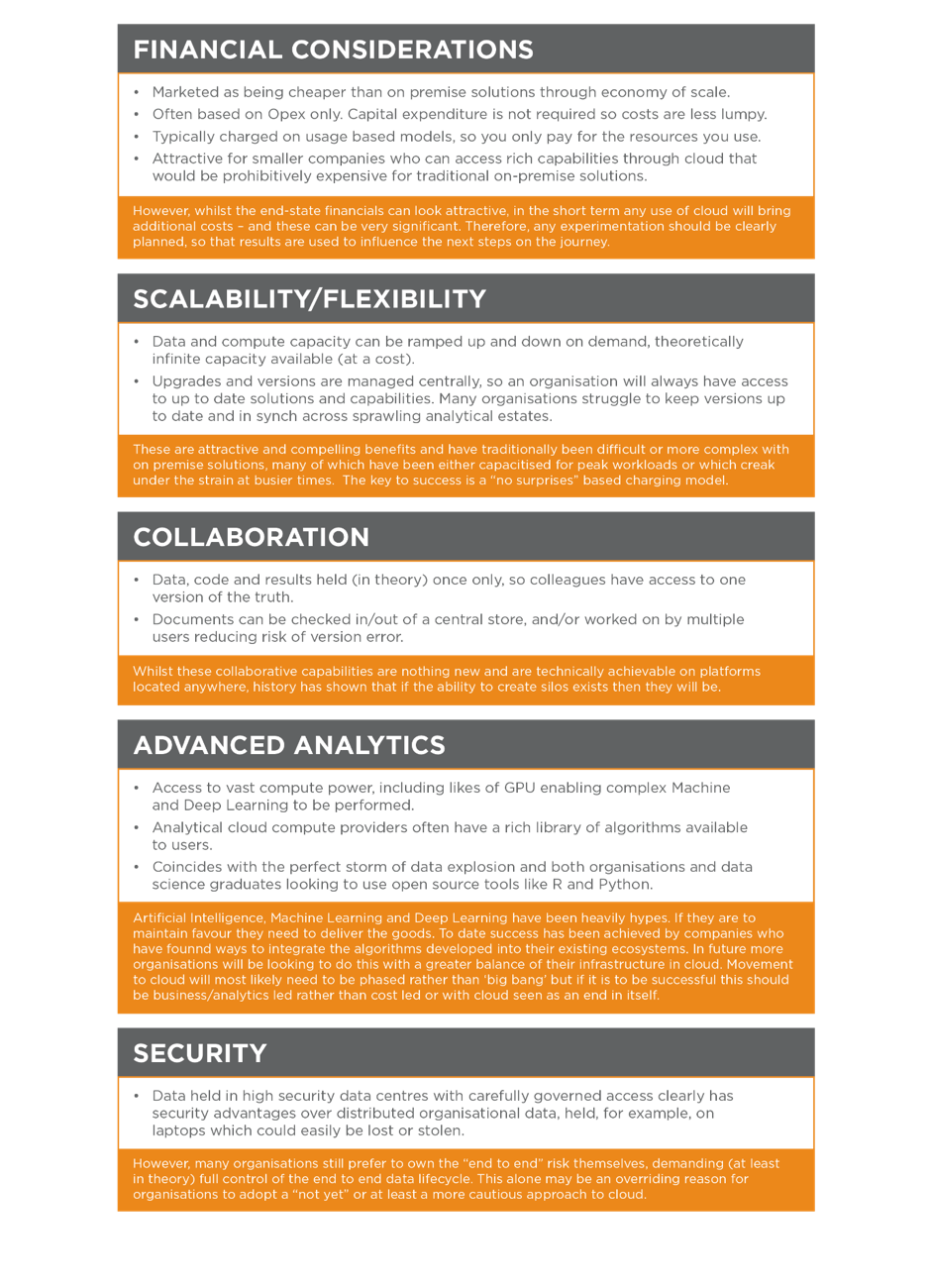

Factors Influencing Cloud Strategies

To understand what its cloud strategy should look like, an organisation needs a clearly articulated set of goals and a set of decision-making factors, prioritised according to their importance to those goals. Because both the market and the organisation’s own strategic priorities change over time, these factors need to be reviewed and adapted regularly. It is therefore particularly beneficial to adopt an evolutionary roadmap rather than a “legacy bad: future state good” one. This is especially true in the field of technology, where the future state is evolving rapidly and an organisation’s needs will inevitably develop too. The table below explores some of the factors which are influencing people’s thinking today.

In the end it’s all about the best technology, isn’t it?

The argument that “it doesn’t matter where my data is, just give me the tools to do the job” holds a great deal of water on paper. However, as we know from experience, it is never that simple. In Financial Services, for instance, as in many industries, analytic users probably face greater workload pressures than ever before. They are often working with systems operating at close to full capacity and with a restricted set of analytic tools. Getting the “day job” done is therefore incredibly challenging, and yet in many instances they are being asked to embrace a cloud strategy which appears to have been determined using a restricted set of decision factors.

To achieve success, therefore, it is vital that decisions are taken using a broad base of parameters, ideally in a way that keeps as many options open as possible along the way. The user community should, in my opinion, be active and vociferous in ensuring that any strategy:

- Meets their needs in terms of providing the data in the right format and at the right quality. It should provide a viable path to accessing best-in-class tools for analysis and production analytics alongside the data, allowing silo proliferation and endless data movement to be eliminated.

- Does NOT add to the data movement or data wrangling burden. Indeed, users should insist that ways to reduce this burden are explored. In many instances well over 60% of people’s time is spent on making data fit for purpose, and perhaps 10% or more on post-analytic quality and reconciliation checks. Investment in presenting a strong pool of industrialised, production-class data for immediate consumption should be a key goal, with more exotic data items held in a more cost-effective form until analytics proves their worth.

OK, so what does this roadmap look like?

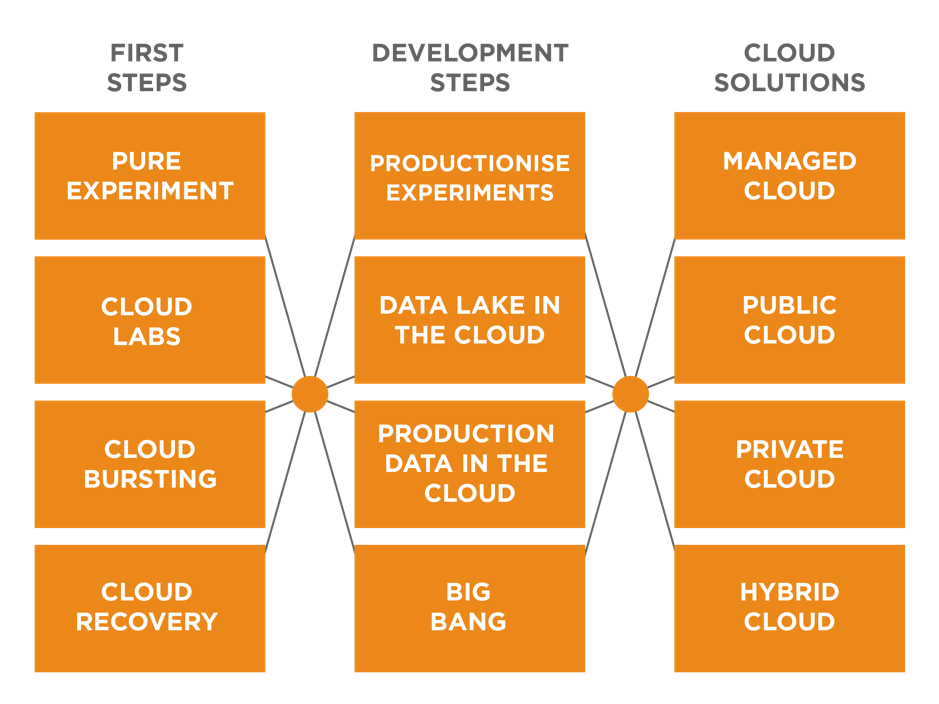

Any journey starts with a first step, but in this case there may be many routes to success. The table below outlines some of the steps which could be chosen. Each organisation must choose the path that is right for it but, more importantly, should be able to change its route as it learns more or as the market itself develops.


I would recommend taking a small, measured step first. By setting goals and evaluation criteria, it should be possible to measure success and determine the next best step. The suggested first steps outlined above may be worthy of consideration:

Step 1 – The “cloud curious” organisation

Pure Experiment – This is arguably the simplest way to start and would involve setting up a series of exercises to test the viability of a cloud solution. Starting simple makes it possible to progress through layers of complexity at low cost. It is important, however, that a few key pointers are borne in mind:

- Evaluation should aim to cover the full data lifecycle, from ingestion to consumption
- Analytic tools and AI experiments should have a viable route to production
- As with any change from the status quo, the total cost of ownership, including the opportunity costs and benefits of insights and analytics, needs to be accounted for

Cloud Labs – Conceptually a “sandbox in the clouds”: an environment where users can create and analyse data. It has the benefit of being easily extensible, and in some ways it requires less formal administration than on-premise sandboxes, as it does not compete with production data and jobs. It is perhaps the next logical step from experimental usage, but costs can easily escalate and need careful management.

Step 2 – The already “joined up” company

Cloud Bursting – In this scenario, certain activities, workloads or transient data can be sent to the cloud as and when required. This allows an on-premise system to be run close to capacity, with cloud resources spun up and used at times of peak demand.
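To make the bursting idea concrete, here is a minimal Python sketch of the routing decision, assuming a simple model in which each job needs a fixed number of hypothetical capacity “slots” and the on-premise system has a fixed slot capacity. The `Job` and `BurstScheduler` names are illustrative only and do not correspond to any Teradata or cloud-provider API; a real implementation would also need to handle data locality, security and the cost of moving data to and from the cloud.

```python
from dataclasses import dataclass, field


@dataclass
class Job:
    name: str
    slots: int  # capacity units the job needs (hypothetical unit of measure)


@dataclass
class BurstScheduler:
    """Toy cloud-bursting router: fill on-premise capacity first, overflow to cloud.

    The slot-based capacity model is an illustrative assumption,
    not a description of any specific product.
    """
    on_prem_capacity: int
    on_prem_used: int = 0
    placements: dict = field(default_factory=dict)

    def submit(self, job: Job) -> str:
        # Keep the on-premise system busy up to its capacity...
        if self.on_prem_used + job.slots <= self.on_prem_capacity:
            self.on_prem_used += job.slots
            target = "on-prem"
        else:
            # ...and send only the overflow to cloud resources at peak demand.
            target = "cloud"
        self.placements[job.name] = target
        return target

    def finish(self, job: Job) -> None:
        # Release on-premise capacity when a job completes; cloud capacity is
        # assumed to be spun down separately once it is no longer needed.
        if self.placements.pop(job.name, None) == "on-prem":
            self.on_prem_used -= job.slots


if __name__ == "__main__":
    scheduler = BurstScheduler(on_prem_capacity=100)
    jobs = [Job("daily_risk", 60), Job("month_end_close", 30), Job("ad_hoc_model", 40)]
    for job in jobs:
        print(job.name, "->", scheduler.submit(job))
```

The design choice is deliberate: on-premise capacity is always filled first, so cloud resources are only consumed for the overflow at peak demand, which is the essence of bursting.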

Cloud Recovery – Set up a disaster recovery (DR) environment in the cloud, which, through economies of scale, can be delivered at a lower cost than maintaining your own DR centre. Keeping the DR environment consistent with the primary environment also simplifies its management and maintenance.

So, what’s next?

To round off, here is a summary of recommendations to give you the best chance of success.


At Teradata we are happy to support you and your organisation in developing a flexible roadmap that leads the way to analytics in the cloud.

You will find more information on what we do, and how we can help you move your analytics to the cloud, at https://www.teradata.com/Products/Cloud.

Simon Axon leads the Financial Services Industry Consulting practice in EMEA. His role is to help our customers drive more commercial value from their data by understanding the impact of integrated data and advanced analytics. Prior to taking up his current role, Simon led the Data Science, Business Analysis & Industry Consultancy practices in the UK & Ireland, utilising his diverse experience across multiple industries to understand our customers’ businesses and identify opportunities to leverage data and analytics to achieve high-impact business outcomes. Before joining Teradata in 2015, Simon worked for the Sainsbury's Group and CACI Limited.
